Projects

My accomplishments so far

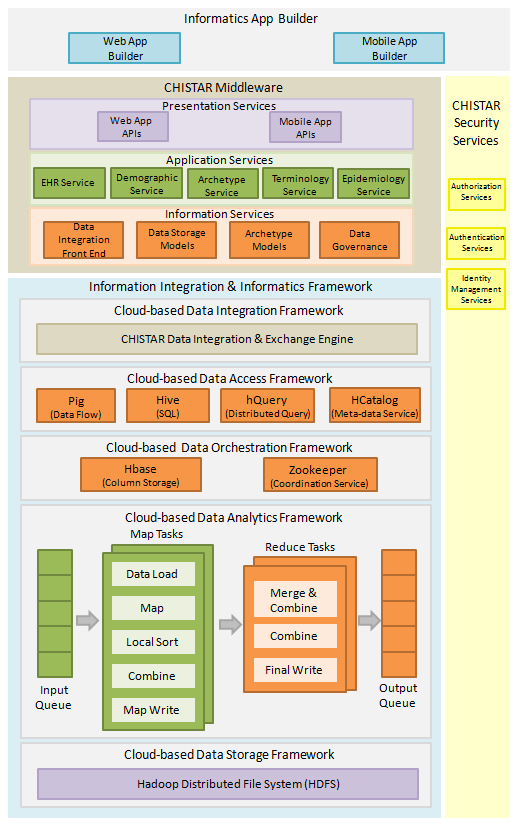

Information Integration & Informatics Framework

Get published PDF here

The Information Integration and Informatics (III) framework is a framework for healthcare applications that leverages the parallel computing capability of a computing cloud based on a large-scale distributed batch processing infrastructure built from commodity hardware.

Healthcare information integration and informatics offers the potential to build advanced healthcare applications, given the massive scale of data collected by EHR systems. Traditional EHR systems are based on different EHR standards, different languages and different technology generations. EHRs are mainly designed to store individual-level data on patient-provider interactions: they capture and store information on patient health and provider actions. The III framework can be used for developing advanced healthcare applications that are backed by massive-scale healthcare data integrated from heterogeneous and distributed healthcare systems and by a scalable cloud infrastructure.

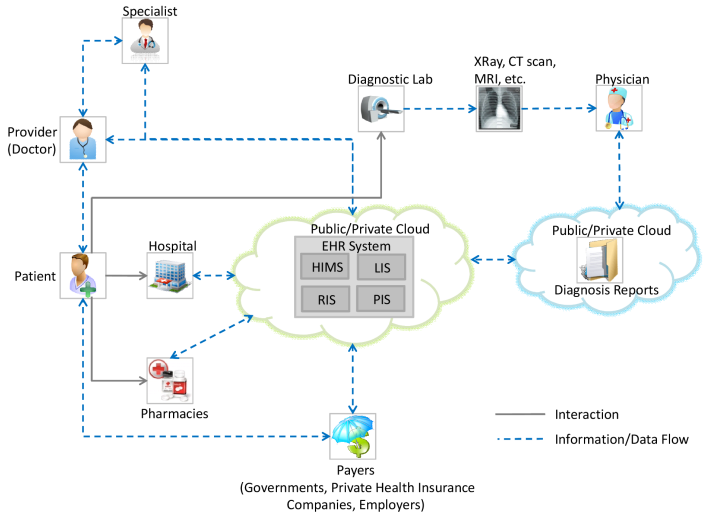

CHISTAR - Cloud EHR

Get published PDF here

Cloud Health Information Systems Technology Architecture (CHISTAR) is a cloud-based EHR system.

CHISTAR addresses the problems faced by traditional client-server EHR systems by adopting a cloud-based approach to the design of an interoperable EHR system. Cloud computing environments provide several benefits to all the stakeholders in the healthcare ecosystem (patients, providers, payers, etc.). Lack of data interoperability standards and solutions has been a major obstacle in the exchange of healthcare data between different stakeholders. CHISTAR achieves semantic interoperability through a generic design methodology that uses a reference model, which defines a general-purpose set of data structures, and an archetype model, which defines the clinical data attributes. CHISTAR application components are designed using the Cloud Component Model approach, which comprises loosely coupled components that communicate asynchronously. Compared to traditional client-server EHR systems, CHISTAR offers better interoperability, scalability, maintainability, portability and accessibility at reduced cost.

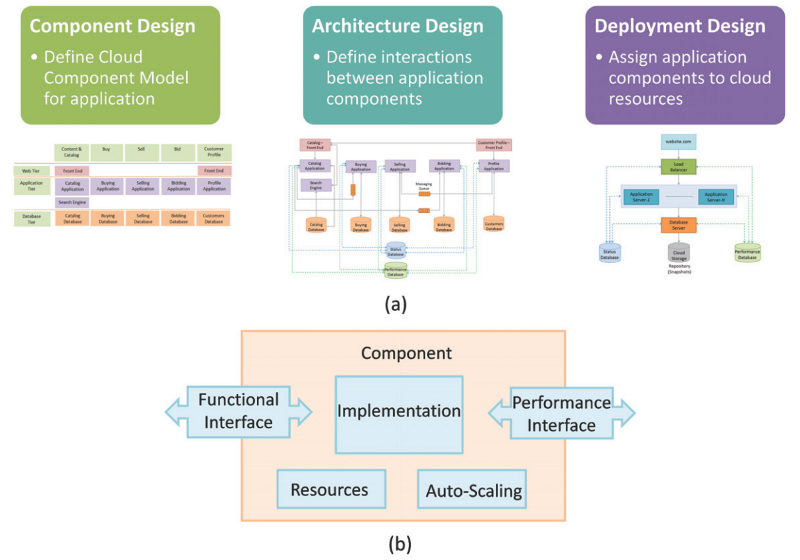

Cloud Component Model

Get published PDF here

Cloud Component Model (CCM) is a generic application modeling and design methodology for complex multi-tier applications deployed in cloud computing environments.

Deploying multi-tier business, retail, financial or social networks on the computing cloud is a complex, expensive and challenging task. While frameworks such as Software as a Service (SaaS) or Platform as a Service (PaaS) appear to offer a pathway to rapid deployment, on closer examination these approaches are both language-specific and architecture-specific, and often tied to a vendor through the use of predefined application templates. The authors propose a new approach to constructing, deploying and optimizing complex cloud-based applications in a language-independent and vendor-neutral manner, allowing rapid prototyping of business applications in a way that assists targeted performance optimization. The proposed approach, Cloud Component Model (CCM), relies on logical functional decomposition of the application, utilizing loosely coupled components in a manner that realizes the promise of the cloud, while freeing the design from the restrictive constraints of a particular programming style or architectural pattern. Our experience with a complex e-Commerce benchmark illustrates CCM's application and the resulting benefits in terms of prototyping time, testing cost, and performance.
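The idea of loosely coupled components that communicate asynchronously can be illustrated with a small Python sketch. This is not CCM's actual API: the component names (`order_component`, `inventory_component`), the message format and the use of an in-process `asyncio.Queue` in place of a real cloud messaging service are all assumptions made for illustration.

```python
import asyncio

async def order_component(outbox, orders):
    """Hypothetical component: publishes order messages without waiting for processing."""
    for order in orders:
        await outbox.put(order)
    await outbox.put(None)  # sentinel: no more messages

async def inventory_component(inbox, stock, log):
    """Hypothetical component: consumes messages and updates stock on its own schedule."""
    while True:
        msg = await inbox.get()
        if msg is None:
            break
        item, qty = msg
        stock[item] = stock.get(item, 0) - qty
        log.append(f"reserved {qty} x {item}")

async def run_pipeline(orders, stock):
    # The queue is the only thing the two components share.
    queue = asyncio.Queue()
    log = []
    await asyncio.gather(
        order_component(queue, orders),
        inventory_component(queue, stock, log),
    )
    return log

stock = {"book": 10, "pen": 5}
log = asyncio.run(run_pipeline([("book", 2), ("pen", 1)], stock))
print(stock, log)
```

Because the components only exchange messages through the queue, either one could be replaced or scaled independently, which is the property the decomposition aims for.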

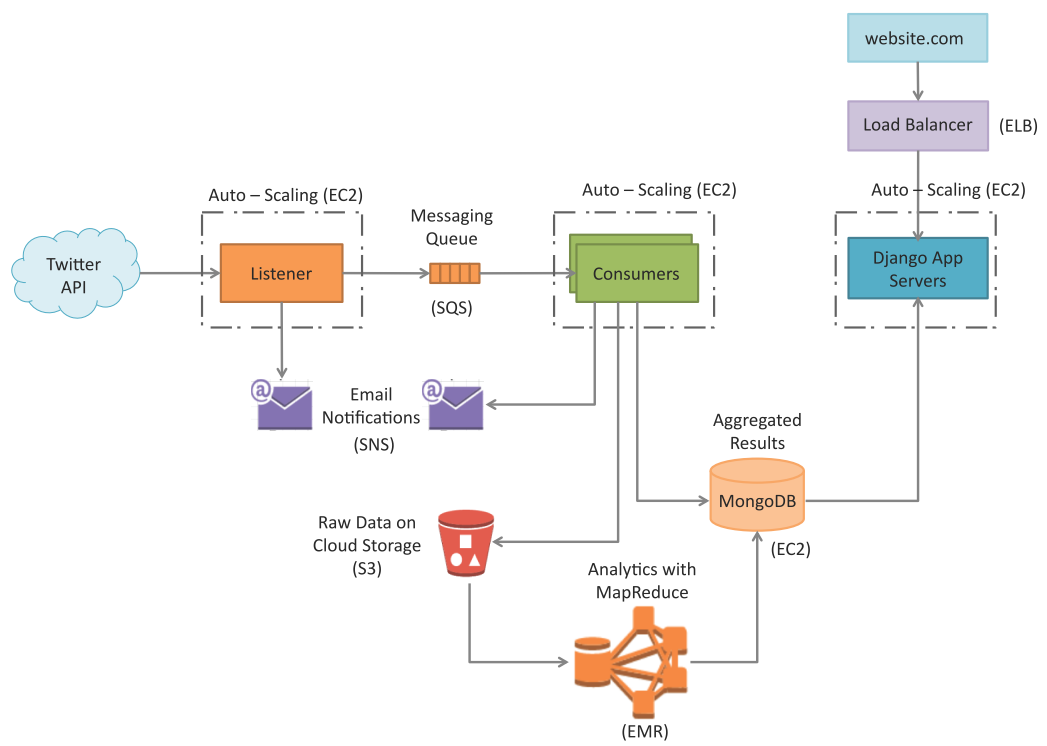

Social Media Analytics Platform

The Social Media Analytics platform collects social media feeds (Twitter tweets) on a specified keyword in real time, analyzes the sentiment of each tweet and provides aggregate results.
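As a rough illustration of the keyword filtering, sentiment analysis and aggregation steps, the sketch below classifies a few in-memory sample texts with a tiny word list. The word lists, the sample texts and the function names are hypothetical; the platform's actual sentiment method and its Twitter collection layer are not described here and are not reproduced.

```python
from collections import Counter

# Hypothetical word lists, for illustration only.
POSITIVE = {"good", "great", "love", "happy", "excellent"}
NEGATIVE = {"bad", "terrible", "hate", "sad", "awful"}

def classify(text):
    """Label a text positive, negative or neutral by counting lexicon hits."""
    words = [w.strip(".,!?#@").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

def aggregate(texts, keyword):
    """Count sentiment labels over the texts that mention the keyword."""
    matching = [t for t in texts if keyword.lower() in t.lower()]
    return Counter(classify(t) for t in matching)

sample = [
    "I love the new cloud dashboard!",
    "The cloud outage today was terrible.",
    "Reading about cloud pricing.",
    "Great weather this weekend.",
]
summary = aggregate(sample, "cloud")
print(dict(summary))
```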

CloudView

Get published PDF here

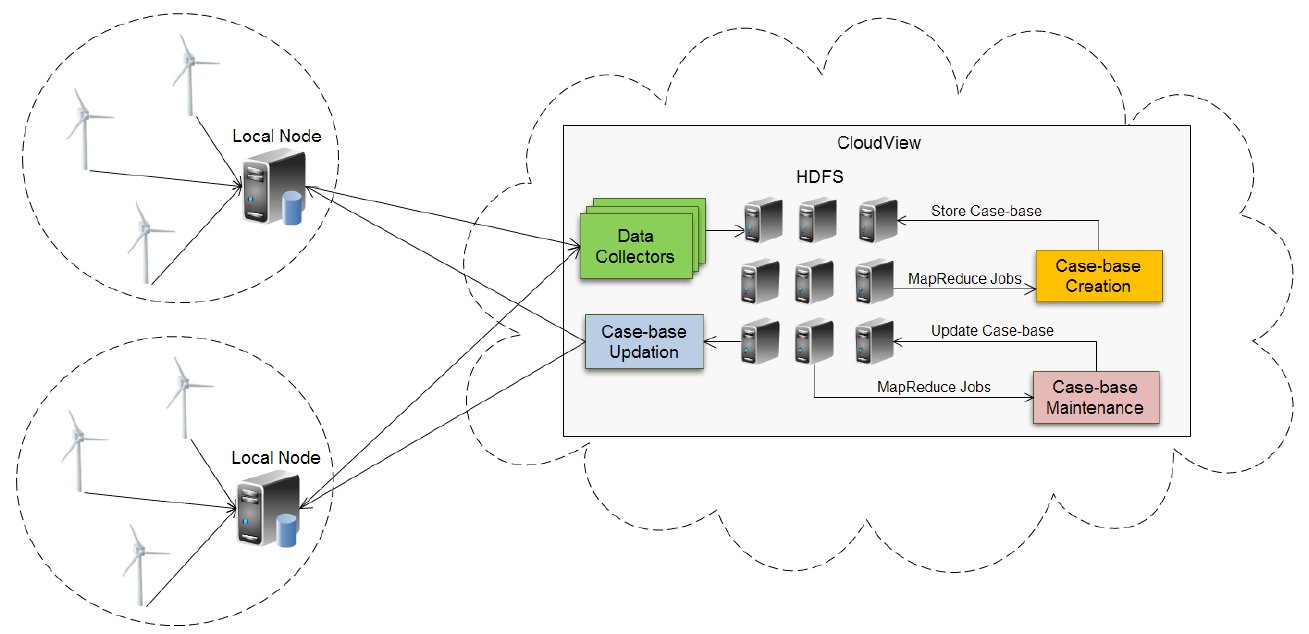

CloudView is a framework for storage, processing and analysis of massive machine maintenance data, collected from a large number of sensors embedded in industrial machines, in a cloud computing environment.

The CloudView framework leverages the parallel computing capability of a computing cloud based on a large-scale distributed batch processing infrastructure built from commodity hardware. A case-based reasoning (CBR) approach is adopted for machine fault prediction: past cases of failure from a large number of machines are collected in the cloud, and a case-base is created from this global information. CloudView facilitates organization of sensor data and creation of the case-base with global information. Case-base creation jobs are formulated using the MapReduce parallel data processing model.

CloudView captures the failure cases across a large number of machines and shares the failure information with a number of local nodes in the form of case-base updates. The case-base is updated regularly on the cloud (on a time scale of a few hours) to include new cases of failure, and these updates are pushed from CloudView to the local nodes. At the local nodes, the real-time sensor data from a group of machines in the same facility/plant is continuously matched to the cases from the case-base to predict incipient faults; this local processing takes a much shorter time of a few seconds.

Experimental measurements show that fault predictions can be done in real time (on a timescale of seconds) at the local nodes, and that massive machine data analysis for case-base creation and updating can be done on a timescale of minutes in the cloud. Our approach, in addition to being the first reported use of the cloud architecture for maintenance data storage, processing and analysis, also evaluates several possible cloud-based architectures that leverage the parallel computing capabilities of the cloud to make local decisions with global information efficiently, while avoiding potential data bottlenecks that can occur in getting the maintenance data in and out of the cloud.
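The split between case-base creation in the cloud and case matching at local nodes can be sketched as follows. This is a simplified illustration under stated assumptions, not CloudView's implementation: the record layout, the fault names, the use of per-fault centroids as cases and plain Euclidean nearest-case matching are all hypothetical, and the map and reduce steps run in a single process rather than on a MapReduce cluster.

```python
import math
from collections import defaultdict

# Hypothetical failure records: (machine_id, fault_type, sensor_vector).
records = [
    ("m1", "bearing_wear", (0.9, 70.0)),
    ("m2", "bearing_wear", (1.1, 74.0)),
    ("m3", "overheating", (0.3, 110.0)),
    ("m4", "overheating", (0.5, 114.0)),
]

def map_record(record):
    """Map step: key each failure record by its fault type."""
    _, fault, vector = record
    return fault, vector

def reduce_cases(fault, vectors):
    """Reduce step: summarise all vectors of one fault type as a centroid case."""
    n = len(vectors)
    centroid = tuple(sum(v[i] for v in vectors) / n for i in range(len(vectors[0])))
    return fault, centroid

def build_case_base(records):
    """Cloud side: group mapped records by key, then reduce each group."""
    grouped = defaultdict(list)
    for fault, vector in map(map_record, records):
        grouped[fault].append(vector)
    return dict(reduce_cases(f, vs) for f, vs in grouped.items())

def match(case_base, reading):
    """Local node: return the fault whose case is nearest to the live reading."""
    return min(case_base, key=lambda f: math.dist(case_base[f], reading))

case_base = build_case_base(records)
print(match(case_base, (1.0, 75.0)))
```

In this sketch the expensive step (`build_case_base`) runs over all machines, while `match` only needs the compact case-base, which mirrors the idea of pushing case-base updates to local nodes. A real system would also normalise sensor features before computing distances.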

CloudTrack

Get published PDF here

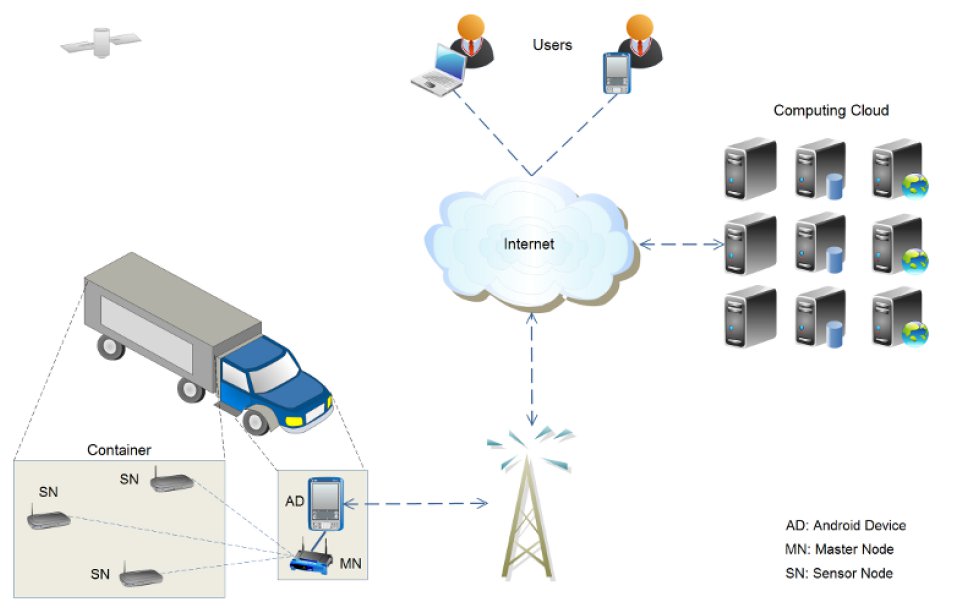

CloudTrack is a cloud-based IT framework for data-driven intelligent transportation systems.

CloudTrack can be used for real-time fresh food supply tracking and monitoring. CloudTrack allows efficient storage, processing and analysis of real-time location and sensor data collected from fresh food supply vehicles. CloudTrack uses a dynamic vehicle routing approach where the alerts trigger the generation of new routes. CloudTrack provides the global information of the entire fleet of food supply vehicles and can be used to track and monitor a large number of vehicles in real time. CloudTrack facilitates organization of sensor data and creation of alerts with global information.

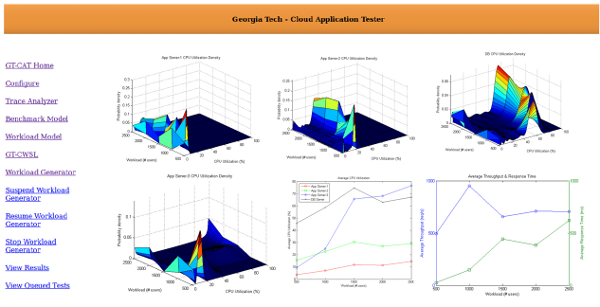

GT-Cloud Application Tester

Get published PDF here

GT-Cloud Application Tester (GT-CAT) is a set of tools for performance evaluation, deployment prototyping and bottleneck detection for multi-tier applications deployed in cloud computing environments.

Complex multi-tier applications deployed in cloud computing environments can experience rapid changes in their workloads. To ensure market readiness of such applications, adequate resources need to be provisioned so that the applications can meet the demands of specified workload levels and at the same time ensure that service level agreements are met. Multi-tier cloud applications can have complex deployment configurations with load balancers, web servers, application servers and database servers. Complex dependencies may exist between servers in various tiers.

GT-CAT uses a performance testing approach based on synthetic workloads to support provisioning and capacity planning decisions. The accuracy of a performance testing approach is determined by how closely the generated synthetic workloads mimic realistic workloads. GT-CAT allows rapid deployment prototyping that can help in choosing the best and most cost-effective deployments for multi-tier applications that meet the specified performance requirements. With GT-CAT, complex deployments can be created rapidly, and a comparative performance analysis of various deployment configurations can be carried out.

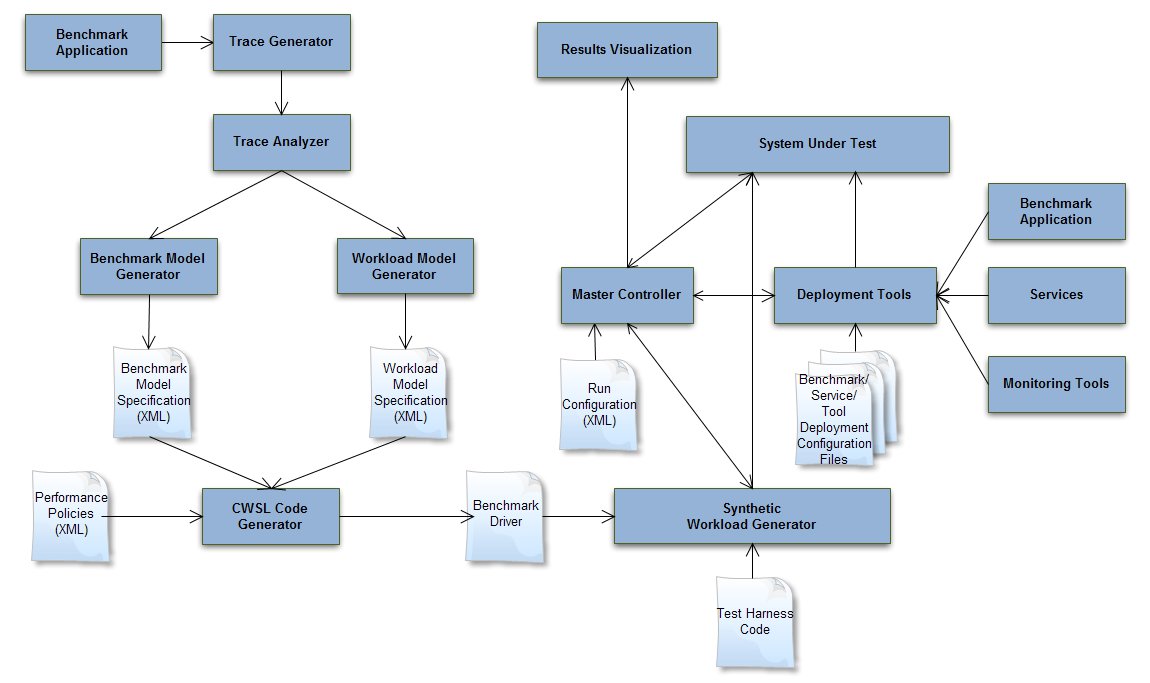

Synthetic Workload Generator for Cloud Applications

Get published PDF here

Synthetic Workload Generator (SWG) generates synthetic workloads for stress testing of multi-tier cloud applications.

SWG uses an approach in which workloads of cloud computing applications are captured in two different models - benchmark application and workload models.

SWG accepts the benchmark and workload model specifications generated by the characterization and modeling of workloads of cloud computing applications.

Synthetic workload generation techniques are required for performance evaluation of complex multi-tier applications such as e-Commerce, Business-to-Business, Banking and Financial, Retail and Social Networking applications deployed in cloud computing environments. Each class of applications has its own characteristic workloads. There is a need to automate the extraction of workload characteristics from different applications, and for a standard way of specifying those characteristics so that they can be used to generate synthetic workloads for performance evaluation. The performance of complex multi-tier systems is an important factor in their success, so performance evaluations are critical for such systems. Provisioning and capacity planning is a challenging task for complex multi-tier systems, as they can experience rapid changes in their workloads, and over-provisioning in advance for such systems is not economically feasible. SWG helps in performance evaluation of multi-tier cloud applications by generating synthetic workloads that have characteristics similar to those of real workloads.
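A minimal sketch of generating a synthetic workload from two model specifications is shown below. The contents of both models are assumptions for illustration: here the benchmark model is taken to be a request mix, and the workload model is taken to hold the number of sessions, a mean session length and a mean think time, with exponentially distributed draws. SWG's actual specification format and distributions are not reproduced.

```python
import random

# Hypothetical benchmark model: request types and their relative frequencies.
BENCHMARK_MODEL = {"home": 0.5, "search": 0.3, "checkout": 0.2}

# Hypothetical workload model: session count and timing attributes.
WORKLOAD_MODEL = {"sessions": 100, "mean_session_length": 5, "mean_think_time_s": 2.0}

def generate_session(rng, benchmark, workload):
    """Return one session as a list of (time_offset_s, request_type) pairs."""
    length = max(1, round(rng.expovariate(1 / workload["mean_session_length"])))
    requests = rng.choices(list(benchmark), weights=list(benchmark.values()), k=length)
    session, clock = [], 0.0
    for request in requests:
        session.append((clock, request))
        # Think time between consecutive requests of the same user.
        clock += rng.expovariate(1 / workload["mean_think_time_s"])
    return session

def generate_workload(benchmark, workload, seed=0):
    """Generate all sessions reproducibly from the two model specifications."""
    rng = random.Random(seed)
    return [generate_session(rng, benchmark, workload) for _ in range(workload["sessions"])]

sessions = generate_workload(BENCHMARK_MODEL, WORKLOAD_MODEL)
print(len(sessions), sessions[0][:3])
```

Keeping the request mix and the timing attributes in separate specifications means the same application model can be replayed under different load levels by changing only the workload model.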

OpenCLosE

Get published PDF here

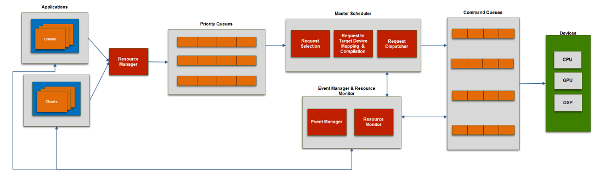

OpenCLosE is a framework for dynamic resource management and scheduling of applications written in Open Computing Language (OpenCL) for heterogeneous multimedia and graphics platforms, such as those found in multimedia smartphones and automotive infotainment clusters.

Although OpenCL gives applications flexibility for parallel processing on heterogeneous platforms, managing resources for multiple applications and scheduling the kernels from different applications on different processors becomes highly complex. OpenCLosE comprises a resource manager and a master scheduler that allow efficient realization of multiple applications within a multitasked platform.

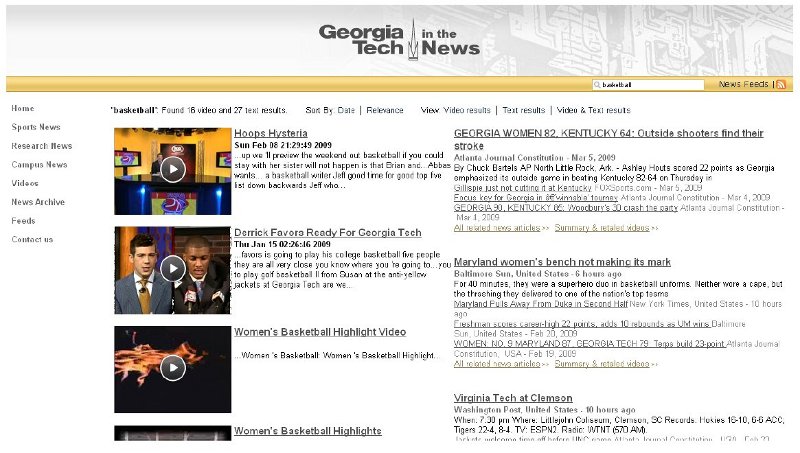

Context-based Video Search

Get published PDF here

The context-based video search approach uses contextual cues to improve search precision.

This approach differs from the content-based approach in that it uses story-level contextual cues, instead of (or supplementing) the shot-level visual or semantic content, for video search. The contextual cues intuitively broaden query coverage and facilitate multi-modal search.

The objective of this project was to design a real-time, automated video clustering and search system that provides users of the search engine with the most relevant videos available in response to a query at a particular moment in time, along with supplementary information that may also be useful. Methods were proposed to mitigate the effect of the semantic gap faced by current content-based video search approaches. A context-sensitive video ranking scheme is used, wherein the context is generated in an automated manner.

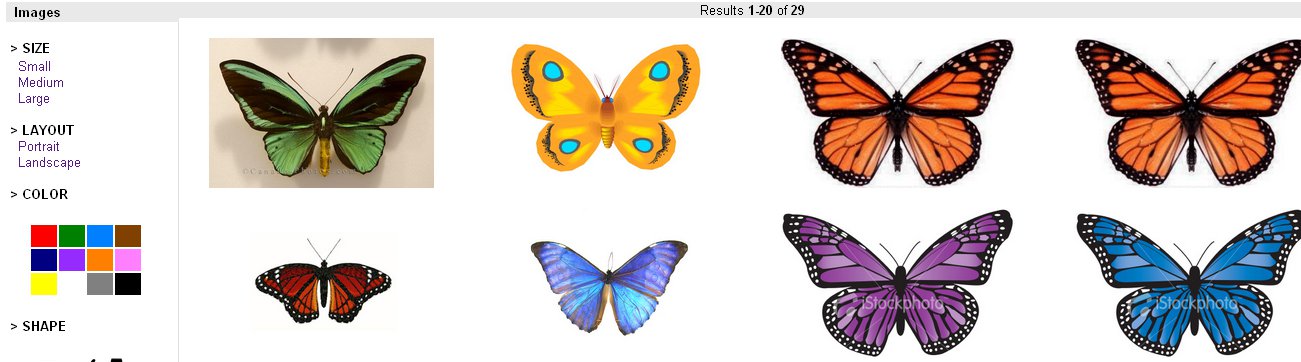

Prakarsh Similar Image Search

Prakarsh is a content-based image retrieval and mining system.

Prakarsh is based on a scalable solution for efficient retrieval of images from large image databases using image features such as color, shape and texture. Prakarsh uses a framework for automatic labeling of images and clustering of metadata in the database based on the dominant shapes, textures and colors in the image.

The users of this system can input a query image and select similar image retrieval criteria by selecting a feature type from amongst color, texture or shape. The system retrieves images from the database that match the specified pattern and displays them by relevance.
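Retrieval by the color feature can be illustrated with a small sketch that ranks database images by the similarity of their color histograms to the query image. The choice of a joint RGB histogram with histogram intersection as the similarity measure is an assumption for illustration, as are the function names and the toy "images" (plain lists of RGB tuples); Prakarsh's actual features and matching method are not reproduced.

```python
def color_histogram(pixels, bins=4):
    """Normalised joint RGB histogram; pixels are (r, g, b) tuples in 0..255."""
    hist = [0.0] * (bins ** 3)
    step = 256 // bins
    for r, g, b in pixels:
        idx = (r // step) * bins * bins + (g // step) * bins + (b // step)
        hist[idx] += 1
    total = len(pixels)
    return [h / total for h in hist]

def similarity(h1, h2):
    """Histogram intersection: 1.0 for identical distributions, 0.0 for disjoint ones."""
    return sum(min(a, b) for a, b in zip(h1, h2))

def search(query_pixels, database, top_k=2):
    """Return the names of the top_k database images, most similar first."""
    q = color_histogram(query_pixels)
    scored = [(similarity(q, color_histogram(px)), name) for name, px in database.items()]
    return [name for _, name in sorted(scored, reverse=True)[:top_k]]

# Toy database: each "image" is just a list of pixels.
database = {
    "sunset": [(250, 120, 30)] * 8 + [(200, 60, 20)] * 2,
    "forest": [(20, 140, 40)] * 10,
    "beach": [(240, 200, 150)] * 6 + [(250, 120, 30)] * 4,
}
query = [(255, 110, 25)] * 10
results = search(query, database)
print(results)
```

Texture or shape retrieval would follow the same pattern with a different feature extractor in place of `color_histogram`.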

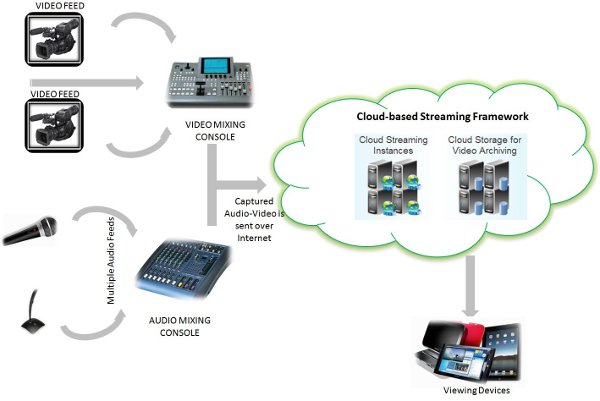

Cloud-based Live Streaming Framework

Cloud-based Live Streaming Framework allows on-demand creation of video streaming instances in the cloud.

Events captured live by high-definition cameras are encoded by video encoder devices using the industry-standard H.264 and VP6 video codecs. The encoded video streams are transmitted over a wireless broadband connection to a computing cloud. On the cloud, streaming instances are created on demand and the streams are then broadcast over the internet. The streaming instances also record the event streams, which are later moved to cloud storage for video archiving.

Real Time Messaging Protocol (RTMP) is a protocol for streaming audio, video and data over the Internet between a Flash player and a server.

Live streamed events can be viewed by audiences around the world on different types of devices connected to the internet such as laptops, netbooks, desktops, tablets, smartphones, internet-TVs, etc.

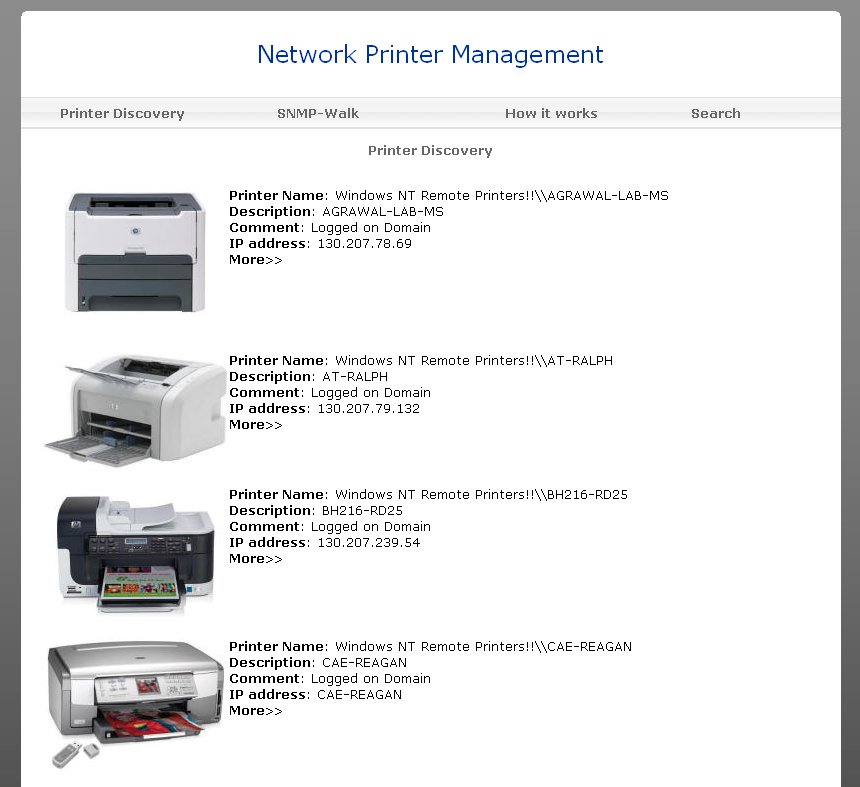

Network Printer Manager

Watch demo video here

Network Printer Manager (NPM) is a web-based tool that can manage any kind of printer on the network at your location, provided the printer supports SNMP.

Printers are useful output devices, but they consume a lot of resources and are prone to mechanical problems. Often they produce excess waste because printer information is not properly managed. To manage a physical printing device, the description, status and alert information for the printer and its various subparts has to be made available to the management application so that it can be reported to the end user. There are many printer management tools on the market that provide complete printer device management capabilities; however, most of them are vendor-specific, which makes managing different models of printers from different vendors a challenging task. They also have proprietary standalone user interfaces and lack an integrated web-based solution for centralized printer management in a company. The NPM tool can discover, configure, manage and troubleshoot printers connected over a network.

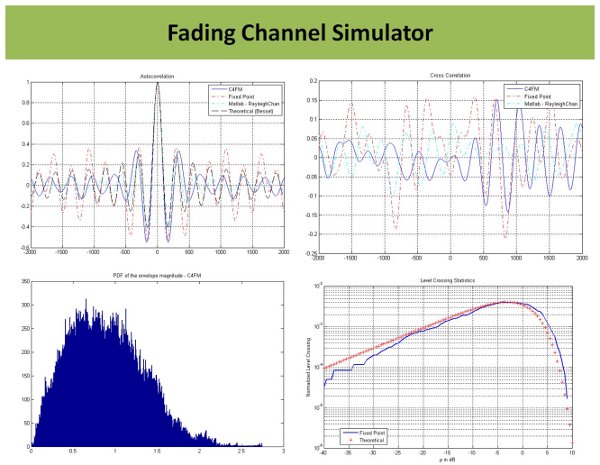

Fading Channel Simulator

Fading Channel Simulator (FCS) is a tool for simulating the effects of fading in a communication channel due to multi-path propagation.

Multipath fading occurs when several reflected versions of a transmitted radio signal arrive at the receiver.

Movement of the receiver causes the number of multipath components, as well as their phases and propagation delays, to vary with time.

The multiple signals can add constructively or destructively, causing the power level at the receive antenna to fluctuate over a large dynamic range.

This fluctuation causes an increased bit error rate (BER) relative to the classical additive white Gaussian noise channel.

FCS is based on the Rayleigh fading model. Rayleigh fading is a statistical model for the effect of a propagation environment on a radio signal.

Rayleigh fading is a reasonable model for the effect of heavily built-up urban environments on radio signals and is applicable when there is no dominant propagation along a line of sight between the transmitter and receiver.
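The power fluctuation described above can be reproduced with a short sketch of a flat Rayleigh fading channel. This is a textbook construction under stated assumptions, not the FCS implementation: each channel gain is drawn independently as a complex Gaussian with unit average power, so the envelope is Rayleigh distributed, and time correlation (Doppler) is ignored. The function name and sample count are hypothetical.

```python
import math
import random

def rayleigh_gains(n, rng):
    """Complex gains h = (x + jy) / sqrt(2) with x, y ~ N(0, 1), so E[|h|^2] = 1."""
    s = 1 / math.sqrt(2)
    return [complex(rng.gauss(0, 1) * s, rng.gauss(0, 1) * s) for _ in range(n)]

rng = random.Random(0)  # fixed seed so the run is reproducible
gains = rayleigh_gains(20000, rng)
powers = [abs(h) ** 2 for h in gains]

mean_power = sum(powers) / len(powers)
# Fraction of samples more than 10 dB below the average received power.
deep_fades = sum(p < 0.1 for p in powers) / len(powers)

print(f"mean power: {mean_power:.3f}, deep-fade fraction: {deep_fades:.3f}")
```

For this model the instantaneous power is exponentially distributed, so the probability of a fade deeper than 10 dB below the mean is 1 - exp(-0.1), roughly 9.5%; the simulated fraction should land close to that value.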